Article

Beyond the Device: Where MedTech Digital Ecosystems Are Starting to Pay Off

This post is Part 1 of the Cybersecurity for Software as a Medical Device blog series, which features an interview with Bruce Parr, a DevSecOps leader and innovator at Paylocity.

DevSecOps (Development, Security, and Operations) is a key modern engineering practice for getting ahead of security by moving it into the normal flow of development. The Software as a Service (SaaS) industry has pioneered DevSecOps because SaaS companies are cloud-first, digital-first businesses. MedTech has a tremendous amount to learn about DevSecOps from SaaS companies, so Orthogonal sought out Bruce Parr, an expert who has been on a multi-year DevSecOps journey at the fast-growing and well-regarded Paylocity. As a payroll and HR SaaS provider, Paylocity has a lot of highly personal data to protect.

In this wide-ranging conversation, Bruce walked us through Paylocity’s DevSecOps journey: what they’ve learned, how they’ve implemented it and what the results have been. In turn, we’ve pointed out the connections to MedTech and connected medical devices: what we can learn from Paylocity’s experience, and what we need to do to adapt these practices to our industry’s unique devices and user base.

When we started this conversation, we thought it would be more technically focused. But it turned out to be a conversation about how a blend of process and leadership shapes security in software applications.

Many thanks to Bruce Parr for an excellent interview!

An additional editorial note from Orthogonal: In the period between when this interview was conducted in late 2021 and when it was published on Orthogonal’s website in January 2022, two major cybersecurity stories broke: the Kronos ransomware attack, which threatened to disrupt employee paychecks and timesheets for weeks,[1] and the Log4j vulnerability.[2]

While this interview occurred before those events made the news, in Randy and Brett’s view, they add further credence to the value of what Bruce Parr shared with us in this interview.

Cybersecurity is on everyone’s mind. As it should be – when your company works with safety-critical infrastructure systems that lives depend on, keeping those systems secure is essential to doing business. But creating truly secure software is challenging when that software does complex, life-sustaining things, such as monitoring someone’s health or treating a chronic condition. Software as a Medical Device (SaMD), connected medical devices and Digital Therapeutics (DTx) have the potential to move the needle on healthcare outcomes and bend the cost curve, but to succeed they must be able to withstand real cybersecurity attacks. Recall the epic Yahoo breach disclosed in 2016, in which private information from 500 million user accounts was stolen, or the 2021 Facebook data leak affecting 533 million accounts.

At Orthogonal, we’ve taken up the challenge of finding a way to make software security “in the flow” of what we do and not an extra step at the end. Patching up security issues at the end of the development process is like trying to fix a car with defects after it has come off the line, often requiring SaMD remediation services to address underlying issues. What we really should be doing, as Total Quality Management and Continuous Quality Improvement teach us, is fixing the upstream process so these defects do not happen again.

With all this top of mind, we happened upon a LinkedIn post from someone with serious software engineering chops as both a technologist and a leader. Bruce Parr is a Pittsburgh-based software professional who has worked for several years at the Chicago-based company Paylocity, a major digital upstart in the HR and payroll software space. Bruce is a senior DevSecOps engineer, a relatively new job title in the software world. Software enterprises like Paylocity have started building DevSecOps roles as an extension of their existing DevOps engineering teams.

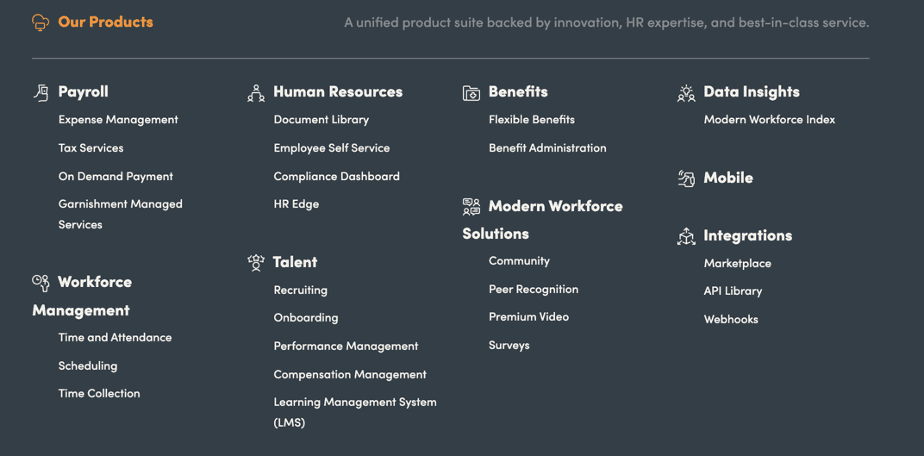

Paylocity has a sustained track record of growth. According to LinkedIn data on its employee base, it has approximately 4,100 employees, up from about 2,000 several years ago. It sells an impressive number of software-based products, each of which requires significant systems engineering capabilities.

This was the post that grabbed us: https://www.linkedin.com/posts/activity-6844643374968926209-gyWH/


Bruce’s post made us wonder: How on earth did Paylocity’s information security team channel their inner Tom Sawyer, so to speak, and get their engineers and DevOps professionals to whitewash the fence[3] by taking proactive ownership of the security of their own code? How did they create a culture, with supporting tools and processes, where security became everyone’s problem and not just the problem of the full-time security team?

Given the high (and ever-increasing) importance of security in the medical device space, and the growing awareness of software-based security threats, we wanted to know more. So we set up a call with Bruce to discuss this topic and see what we could learn from him. Suspecting that it would be a rich conversation, we recorded the call. What we present here is an edited transcript of our expert interview.[4]

Joining Bruce from our team at Orthogonal were Randy Horton, Orthogonal’s VP of Solutions and Partnerships, and Brett Stewart, a Principal Solutions Architect at Orthogonal.

Randy:

Bruce, thanks so much for taking the time to sit down with us. We wanted to talk to you and tap into your brain because right now, in the world of medical devices, there are a lot of people with a stake in the cybersecurity of their devices. These are people in organizations creating medical devices who work across software, hardware, R&D, commercialization, quality, patient safety, clinical trials and regulatory affairs, just to name a few. And they are all wondering some version of the same questions:

So Bruce, Paylocity is a pretty serious Software as a Service (SaaS) company. Can you tell us a little about why you take security so seriously, and how you address these issues?

Bruce:

Paylocity hosts and processes a lot of Personally Identifiable Information (PII), Company, HR, and Employee Confidential data, and payroll data within our system. There’s a whole range of bad things that could result from a security breach of our software and data:

Randy:

So, to open things up: security is about protection. What’s the nightmare scenario that you would point to at Paylocity and say, “That’s not going to happen here on our watch”?

Bruce:

Without going into the kind of detail that’s going to give anyone any ideas or insights to make it easier for them to target us, there have been a few different nightmare scenarios that have happened elsewhere that are the kinds of things that we work hard every day to avoid.

Just think about all of the system breaches that have happened that have exposed lots of sensitive information: Yahoo, Equifax and Facebook, for example. Big companies with a lot of data to secure. We work hard to protect against those kinds of breaches.

Randy:

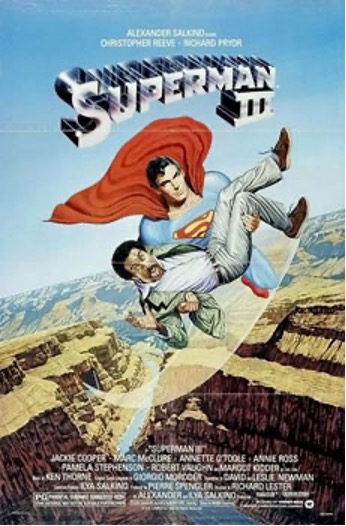

If you want to get more into elaborate security risks… I’ll date myself a bit. In 1983, the comedian Richard Pryor played a computer programmer working for the bad guy in Superman III. His plotline in the movie, I later found out, was based on a true story. A guy who ran payroll systems found out that a lot of people were not getting paid a half penny a paycheck due to a formula used in payroll calculations. So this payroll guy wrote a program to pay himself all those half pennies, and ended up getting a big check. They call this technique “salami slicing.”[5]

Image from Wikipedia[6]
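The half-penny leak in Randy’s story comes down to how fractional amounts are rounded. As a hypothetical illustration (not how Paylocity or any real payroll system is implemented), the Python sketch below truncates each computed paycheck to whole cents with the standard `decimal` module, measures the fractions that truncation drops, and applies a simple integrity control: the books only reconcile if those fractions land in an audited rounding account instead of disappearing. The amounts and the truncation policy are assumptions for the example.

```python
from decimal import Decimal, ROUND_DOWN

CENT = Decimal("0.01")


def run_payroll(gross):
    """Truncate each computed paycheck to whole cents (a hypothetical policy)."""
    paid = [g.quantize(CENT, rounding=ROUND_DOWN) for g in gross]
    # The fractions of a cent dropped by truncation: the "salami slices".
    residue = sum(gross, Decimal(0)) - sum(paid, Decimal(0))
    return paid, residue


def reconciles(gross, paid, rounding_account):
    """Integrity control: computed pay must equal the amounts paid out plus
    the amount booked to an audited rounding account, exactly."""
    return sum(gross, Decimal(0)) == sum(paid, Decimal(0)) + rounding_account


# Hypothetical computed gross amounts carrying sub-cent precision.
gross = [Decimal("1234.5675"), Decimal("987.6543"), Decimal("2500.0049")]
paid, residue = run_payroll(gross)
print(residue)                                # 0.0167
print(reconciles(gross, paid, residue))       # True: residue is accounted for
print(reconciles(gross, paid, Decimal("0")))  # False: residue went missing
```

In a salami-slicing scheme the residue is quietly redirected; a reconciliation that must balance to the exact fraction of a cent turns that silent leak into a visible failure.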

Brett:

There’s certainly been a lot of unemployment insurance fraud the last few years, where people start getting letters from their State Unemployment Office saying they have filed for unemployment[7] when they’re fully employed and working hard every day. It certainly causes a lot of headaches for everyone involved while it gets sorted out. And a lot of taxpayer money, company money and stress is wasted.

Bruce:

That is a very good illustration of this risk. I even got hit with it. One of my HR reps reached out to me and asked if I had filed for unemployment.

One more example, if you want to get a little more “real world meets Hollywood plotline”: A few years ago, hackers working for the Chinese government accessed a critical personnel database of the US federal government.[8] Those hackers got access to millions of background checks containing the private information of people working in the government, which had all kinds of national security implications. It raised the possibility of a foreign government targeting US federal employees and contractors for blackmail using confidential information about them.

Brett:

When you compare medical device software to payroll software, payroll code doesn’t operate on the human body, but there’s a lot of risk here to individuals and organizations on many levels. Even if you aren’t bound the way we are by standards like ISO 13485:2016[9] and IEC 62304:2006,[10] you have a lot at stake if there are security issues.

Randy:

Bruce, when I called you to set up this interview, you had said that one of the key challenges for security at Paylocity is that the enterprise has been on a constant growth path, and that if anything the scale of what has to be secured keeps growing and growing.

Bruce:

Definitely. Paylocity has grown to a headcount of over 4,100 employees in 2021. Software engineering makes up a significant portion of the overall employee base. Those people work across a whole range of Paylocity products and services, each of which in turn runs on a very large and modular code base.

Just to give some perspective, when I joined the company as a software engineer six and a half years ago, we had one monolithic group of engineers. They were broken out into sub-teams working on different projects, but it was just basically one big group of sub-teams all working on essentially the same product.

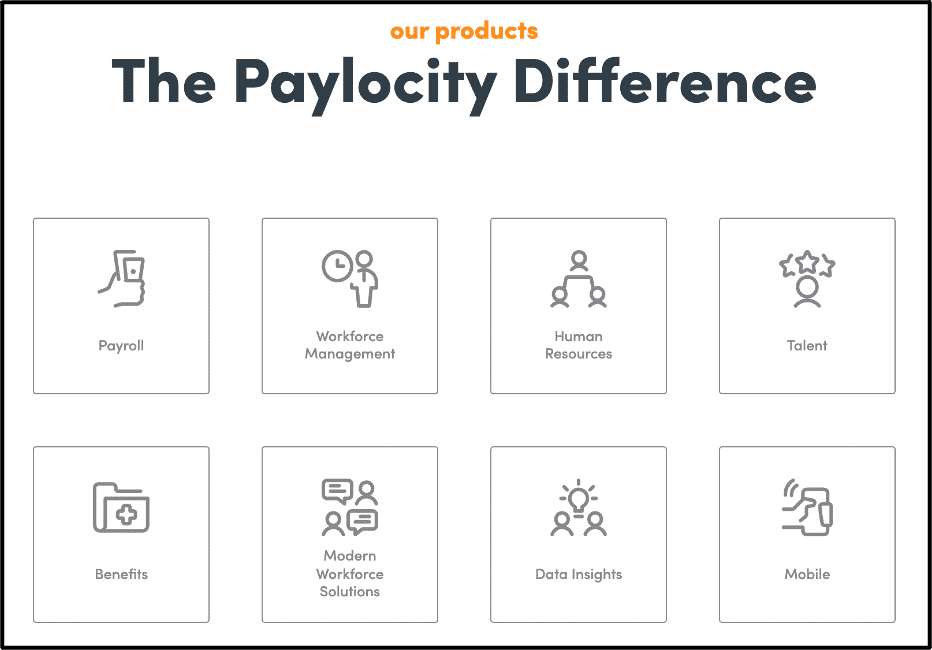

Then about five and a half years ago, we spun out the first team from this big engineering group and assigned them to specifically support a standalone product category. That worked well, so we kept spinning out groups. From an organizational perspective, we have a multitude of separate teams supporting six or seven different categories at this point.

Source: Paylocity Products [11]

Another way to think about our growth and scale as a software company is to look at our code base. We’re probably pushing upwards of several thousand code repositories across Paylocity, including internal and external facing projects – and that doesn’t even count the external dependencies we have on open-source software libraries.
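At this scale, a question like “which repositories use a vulnerable version of this library?” has to be answerable quickly. A minimal sketch, assuming a hypothetical, simplified manifest format of `name==version` strings per repository (real Java, .NET or JavaScript projects each use their own manifest formats, and dedicated software composition analysis tools do this far more thoroughly), is to invert the repo-to-dependency map into a dependency-to-repo index. The repository names below are hypothetical.

```python
from collections import defaultdict


def build_dependency_index(manifests):
    """Invert {repo: ["name==version", ...]} into {name: {(repo, version), ...}}."""
    index = defaultdict(set)
    for repo, deps in manifests.items():
        for dep in deps:
            name, _, version = dep.partition("==")
            # Normalize names so "Log4j-Core" and "log4j-core" match.
            index[name.strip().lower()].add((repo, version.strip()))
    return index


def repos_using(index, package, bad_versions):
    """List repos that depend on `package` at any version in `bad_versions`."""
    hits = index.get(package.lower(), set())
    return sorted(repo for repo, version in hits if version in bad_versions)


# Hypothetical repositories and their declared dependencies.
manifests = {
    "payroll-api": ["log4j-core==2.14.1", "jackson-databind==2.12.3"],
    "hr-portal": ["log4j-core==2.17.1"],
    "benefits-sync": ["Log4j-Core==2.14.1"],
}
index = build_dependency_index(manifests)
print(repos_using(index, "log4j-core", {"2.14.1"}))  # ['benefits-sync', 'payroll-api']
```

Building the index once and querying it per advisory keeps the cost of answering a new vulnerability report proportional to the number of affected repos, not the total number of repos.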

Randy:

So how do you go about ensuring security across thousands of proprietary code repositories? That sounds like it could be a real headache on the best of days.

Bruce:

When this gets discussed in security circles, we use the acronym CIA: Confidentiality, Integrity and Availability. For the C in CIA, Confidentiality, I believe the payroll and HR data we protect is just as important to keep confidential as the medical information you protect under HIPAA. That data is sacrosanct. That’s especially true of Personally Identifiable Information and HR-sensitive data, because some of it could be embarrassing if it were released. The ultimate goal of a security organization is to protect data.

Brett:

You’ve got it just right.

That’s a great acronym, and we agree: medical data, like financial data, is highly sensitive and must be secured with proper access controls, processes, and training.

Bruce:

In terms of Integrity, the I in CIA, for a payroll company, we make sure people get paid, they get paid on time, they get paid the right amount, and their taxes are correct. Their benefits too – 401(k) and all of that fun stuff. That data has to be spot on, dead set accurate, because the money has to go to a particular bank account. We also have to make sure that the taxes go to the appropriate taxing bodies. And if any of that information is changed, we know who made the changes and when.
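Knowing “who made the changes and when” is an audit-trail requirement. One common way to make such a trail tamper-evident, shown here as a sketch and not as Paylocity’s implementation, is a hash chain: each entry stores a SHA-256 digest of its own contents plus the previous entry’s digest, so editing, removing or reordering any past entry breaks verification. The field names and sample values are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only change log; each entry commits to the previous entry's hash."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(entry):
        # Hash every field except the stored hash itself, in a stable key order.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def record(self, user, field, old, new, when=None):
        entry = {
            "user": user, "field": field, "old": old, "new": new,
            "when": when or datetime.now(timezone.utc).isoformat(),
            "prev": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)

    def verify(self):
        """Recompute the chain; any edited, removed or reordered entry breaks it."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.record("alice", "bank_account", "****1234", "****9876", "2022-01-10T15:00:00+00:00")
log.record("bob", "federal_withholding", "12%", "15%", "2022-01-11T09:30:00+00:00")
print(log.verify())  # True
log.entries[0]["user"] = "mallory"  # simulate tampering with history
print(log.verify())  # False
```

A hash chain only detects tampering; someone able to rewrite the entire log could recompute every digest, so in practice the latest hash would also be stored or signed somewhere the application itself cannot modify.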

I’m not in medical devices, but I can imagine that in your space, integrity is also crucial. It’s just that there are different types of consequences from messing with data integrity. I would imagine it’s a different SLA (service level agreement), but you have things where accurate data has to be provided to a medical device, like an insulin pump or pacemaker, within a certain amount of time – maybe even in milliseconds. A small variation in the data could really hurt someone, and a big variation could harm them permanently. Even 10 points off on a glucose measurement from a continuous glucose monitor could be life-threatening.
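Bruce’s point combines integrity (the value must not be altered) with timeliness (it must arrive within a deadline). A minimal sketch of both checks, assuming a hypothetical pre-shared key and a hypothetical 500 ms freshness window, uses an HMAC-SHA256 tag to detect tampering and a timestamp to reject stale readings. It is illustrative only; a real device would also need key provisioning, replay protection and whatever timing guarantees its own risk analysis requires.

```python
import hashlib
import hmac
import json
import time

MAX_AGE_MS = 500  # hypothetical freshness window; real limits are device-specific


def now_ms():
    return int(time.time() * 1000)


def sign(key, reading, ts_ms=None):
    """Serialize a reading with a timestamp and attach an HMAC-SHA256 tag."""
    message = {"reading": reading, "ts_ms": now_ms() if ts_ms is None else ts_ms}
    payload = json.dumps(message, sort_keys=True).encode()
    return payload, hmac.new(key, payload, hashlib.sha256).hexdigest()


def accept(key, payload, tag, at_ms=None):
    """Return the reading only if it is untampered and fresh; otherwise None."""
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, tag):
        return None  # altered in transit, or signed with a different key
    message = json.loads(payload)
    age = (now_ms() if at_ms is None else at_ms) - message["ts_ms"]
    if not 0 <= age <= MAX_AGE_MS:
        return None  # too old (or from the future): do not act on it
    return message["reading"]


key = b"hypothetical-shared-device-key"
payload, tag = sign(key, {"glucose_mg_dl": 112}, ts_ms=1_000)
print(accept(key, payload, tag, at_ms=1_200))  # {'glucose_mg_dl': 112}
print(accept(key, payload, tag, at_ms=2_000))  # None: stale
print(accept(key, payload.replace(b"112", b"122"), tag, at_ms=1_200))  # None: tampered
```

Note that a captured message could still be replayed inside the freshness window; adding a monotonic sequence number to the signed payload is one common way to close that gap.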

Now for Availability, the last of the CIA letters. For us, the payroll system has to run, and it has to run on time. While I can’t give out exact numbers, you can assume Paylocity probably has thousands of clients that have to be able to access the system when they need it, how they need it. That’s no different than a medical device. You have to count on its availability.
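Availability targets are usually stated as a percentage of uptime, and the arithmetic behind them shows how little room there is for an outage. Bruce does not share Paylocity’s numbers, so the targets below are hypothetical: over a 30-day month (43,200 minutes), 99.9% availability allows about 43.2 minutes of downtime, and 99.99% allows about 4.3 minutes.

```python
MINUTES_PER_DAY = 24 * 60


def downtime_budget_minutes(availability_pct, period_days=30):
    """Maximum downtime permitted in a period for a given availability target."""
    return period_days * MINUTES_PER_DAY * (1 - availability_pct / 100)


# Hypothetical targets; Paylocity's actual SLAs are not given in the interview.
for target in (99.0, 99.9, 99.99):
    print(f"{target}% over 30 days: {downtime_budget_minutes(target):.1f} minutes")
```

For a payroll run or a medical device, the timing of an outage matters as much as its length: a few minutes at the wrong moment can miss a deadline that a monthly average never reveals.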

Brett:

I would imagine that if somebody breached you and took down your entire system, and you couldn’t run payroll for a month for your clients, that might actually be big enough to show as a blip in the GDP. You would actually be able to see that data point.

Bruce:

I would imagine that.

You talked about the medical devices and some of the stuff that you just mentioned. What it really all boils down to is proper authentication and authorization controls. It’s not just about authenticating to our system or in your case, authenticating to the medical device.

For us it’s also a matter of approvals and audits in our system. Just authenticating to the system isn’t enough. We also have to know who the user is once they’re in the system, so that we can confirm they are authorized to use a particular function. And approvals are crucial so that no one person can take certain actions, like changing somebody’s position or role, without a series of approvals from multiple people.
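The distinction Bruce draws between authentication (who you are), authorization (what you may do) and multi-person approval can be sketched in a few lines. Everything here is hypothetical: the role names, the permission strings and the two-approver threshold are assumptions for illustration, not Paylocity’s actual controls.

```python
from dataclasses import dataclass, field

# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS = {
    "hr_admin": {"view_employee", "request_role_change", "approve_role_change"},
    "manager": {"view_employee", "approve_role_change"},
    "employee": {"view_self"},
}
REQUIRED_APPROVALS = 2  # hypothetical threshold


def authorized(user_roles, user, permission):
    """Authorization: does any role of this (already authenticated) user grant it?"""
    return any(permission in ROLE_PERMISSIONS.get(role, set())
               for role in user_roles.get(user, ()))


@dataclass
class RoleChangeRequest:
    requester: str
    subject: str
    new_role: str
    approvers: set = field(default_factory=set)


def submit(user_roles, requester, subject, new_role):
    if not authorized(user_roles, requester, "request_role_change"):
        raise PermissionError(f"{requester} may not request role changes")
    return RoleChangeRequest(requester, subject, new_role)


def approve(user_roles, request, approver):
    if not authorized(user_roles, approver, "approve_role_change"):
        raise PermissionError(f"{approver} may not approve role changes")
    if approver in (request.requester, request.subject):
        raise PermissionError("no one may approve a change they requested or that affects them")
    request.approvers.add(approver)  # a set, so repeat approvals do not count twice


def can_apply(request):
    """The change takes effect only after enough distinct approvals."""
    return len(request.approvers) >= REQUIRED_APPROVALS


user_roles = {"ana": {"hr_admin"}, "ben": {"manager"}, "cy": {"hr_admin"}, "dee": {"employee"}}
req = submit(user_roles, "ana", "dee", "manager")
approve(user_roles, req, "ben")
print(can_apply(req))  # False: one approval so far
approve(user_roles, req, "cy")
print(can_apply(req))  # True
```

Keeping the approval rule separate from the permission check means the threshold can be raised for more sensitive actions without touching the role definitions.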

Those are the kinds of access security methods that I imagine would be beneficial for a medical device, if they don’t already exist. For example, changing the configuration on a medical device would require not just valid credentials, but probably also multi-factor authentication or something of that nature.
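One widely used second factor is a time-based one-time password (TOTP, RFC 6238), the six-digit code generated by authenticator apps. The sketch below implements TOTP with HMAC-SHA1 from Python’s standard library and gates a hypothetical `apply_config_change` function on both factors. It illustrates the idea Bruce describes and is not a production authenticator: real systems also need secure secret storage, rate limiting and protection against code reuse.

```python
import hashlib
import hmac
import struct
import time


def totp(secret, for_time, step=30, digits=6):
    """RFC 6238 TOTP (HMAC-SHA1) with RFC 4226 dynamic truncation."""
    counter = int(for_time // step)
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify_totp(secret, submitted, now=None, window=1, step=30):
    """Accept codes from the current step or +/- `window` steps of clock drift."""
    now = time.time() if now is None else now
    return any(hmac.compare_digest(totp(secret, now + i * step, step), submitted)
               for i in range(-window, window + 1) if now + i * step >= 0)


def apply_config_change(credentials_ok, secret, otp, change, now=None):
    """Hypothetical gate: run `change` only if both factors succeed."""
    if not credentials_ok:
        raise PermissionError("first factor (credentials) failed")
    if not verify_totp(secret, otp, now):
        raise PermissionError("second factor (one-time code) failed")
    return change()


secret = b"12345678901234567890"  # RFC 6238 test secret; never use in production
code = totp(secret, 59)  # "287082", the last six digits of the RFC's 94287082 vector
print(apply_config_change(True, secret, code, lambda: "alarm threshold updated", now=59))
```

The drift window trades a little security for usability; a window of one step on each side is a common compromise, but the right value for a medical device would come out of its own risk analysis.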


Bruce Parr, DevSecOps Team Lead at Paylocity

Bruce Parr is a software engineer with a passion for finding solutions to difficult challenges in the world of cybersecurity. As a software engineer and the manager of the DevSecOps team at Paylocity, he spearheads the technical and organizational culture shift around adopting security measures as part of the product development process.

Randy Horton, VP, Solutions and Partnerships

Randy has spent over a decade working with healthcare and life sciences organizations to tackle the Quadruple Aim: 1) optimizing health system performance by improving the individual experience of care, 2) advancing the health of populations, 3) reducing the per capita cost of care, and 4) enhancing the work-life of those who deliver care.

Brett Stewart, Principal Solutions Architect

Brett is a technology architect responsible for solutions engineering for a wide range of SaMD client projects and system architectures at Orthogonal. He brings over 20 years of experience delivering enterprise and mobile software solutions in industries ranging from diabetes care to national intelligence, where he developed his extensive engineering knowledge.

References:

1. Korn J. Kronos ransomware attack could impact employee paychecks and timesheets for weeks. CNN. https://www.cnn.com/2021/12/16/tech/kronos-ransomware-attack/index.html. Published 2021. Accessed January 18, 2022.
2. Rimol M. Log4j Vulnerability: What Security Leaders Need To Know and Do. Gartner. https://www.gartner.com/en/articles/what-security-leaders-need-to-know-and-do-about-the-log4j-vulnerability. Published 2021. Accessed January 18, 2022.
3. Tom Sawyer Whitewashing the Fence | Mark Twain | Ken Burns | PBS. https://www.pbs.org/kenburns/mark-twain/tom-sawyer/. Accessed January 18, 2022.
4. This conversation transcript has been edited for clarity, a streamlined storyline and brevity.
5. Mikkelson D. The Salami Embezzlement Technique. Snopes.com. https://www.snopes.com/fact-check/the-salami-technique/. Published 2001. Accessed January 18, 2022.
6. Superman III - Wikipedia. En.wikipedia.org. https://en.wikipedia.org/wiki/Superman_III. Accessed January 18, 2022.
7. Knowles J, Pistone A. Illinois IDES unemployment fraud reports top 1 million; how the scam became widespread. ABC7 Chicago. https://abc7chicago.com/ides-unemployment-in-illinois-fraud-report/10367159/. Published 2021. Accessed January 18, 2022.
8. Fruhlinger J. The OPM hack explained: Bad security practices meet China's Captain America. CSO Online. https://www.csoonline.com/article/3318238/the-opm-hack-explained-bad-security-practices-meet-chinas-captain-america.html. Published 2020. Accessed January 18, 2022.
9. ISO 13485:2016. ISO. https://www.iso.org/standard/59752.html. Published 2016. Accessed January 18, 2022.
10. IEC 62304:2006. ISO. https://www.iso.org/standard/38421.html. Published 2006. Accessed January 18, 2022.
11. HR and Payroll Solutions. Paylocity.com. https://www.paylocity.com/our-products/. Accessed January 18, 2022.
