Article

Why MedTech Teams Slow Down as Software Grows and What Better Architecture Looks Like

Orthogonal is pleased to publish this guest blog by Scott Hanson, an industry expert on healthcare cybersecurity at MedSec, a medical device security consultancy. Scott and I met when we were both speaking at MD&M Minneapolis back in November 2021.

At that conference, I co-presented a talk with Pat Baird from Philips. We spoke about a proposed new approach to validating medical devices when key components of the technology are not fully under the control of the medical device manufacturer: for example, when part or all of a medical device operates on a public cloud such as Amazon Web Services, Microsoft Azure or Google Cloud; when the device sits on a smartphone selected, owned and patched by the patient; or when the device interfaces with a third-party information system such as an AI/ML algorithm, an EHR system or a clinical decision support system.

The basic premise of this work is now embodied in AAMI CR510:2021, Appropriate use of public cloud computing for quality systems and medical devices. This Consensus Report has considered the benefits of cloud computing and determined that the best that can be achieved is an intermittently validated state. It makes the case that by following the Six Key Recommendations for responsibly embracing the cloud to support the operation of a medical device laid out in the CR, medical device software manufacturers can manage the uncertainty of the cloud without compromising device safety or function.

CR510 is serving as the framework for an in-progress AAMI Technical Information Report (TIR#115). It’s also being examined by many organizations looking at the use of cloud across regulated functions in MedTech, not limited to an actual medical device. We have nicknamed this broader work “TINY GVS” which stands for “This Is Not Your Grandmother’s Validated State” because it represents a significant, generational shift in how we think about validation and validated devices in our industry.

After our talk, Scott approached Pat and me and gave us an excellent example of how maintaining a validated state has been an issue in older medical devices, well before cloud computing. He described an in-hospital medical device that works with a stand-alone server (like ones supporting an MRI machine or ICU equipment). When there’s a critical security patch for that server, there’s significant pressure from hospital cybersecurity leaders to apply it to the device’s OS immediately, without waiting for the medical device manufacturer to approve the patch or give guidance on how to apply it without disrupting the functions of the medical device.

Here is another example showing that this issue was not created by cloud computing: it has been multiple decades since clinics began installing Windows applications on PCs that were connected by a cable to a medical device. Back in the 1990s, we saw situations where someone installed an unauthorized screensaver application or video game on such a computer, and it ended up disrupting the function of critical applications. The issue behind TINY GVS is not new, but with the advent of cloud computing, it has become a more significant concern.

With that, we are pleased to share Scott’s perspective on this issue.

“Hospitals should not patch the operating system or third-party libraries on their own medical devices[1].” That is what conventional wisdom would have us believe, based on Design Verification and Validation expectations in FDA Quality System Regulations[2]. Let’s discuss why we hear this phrase so often and weigh the real risks of patching medical devices without full validation by the manufacturer.

It’s common for modern medical devices, like MRI machines, to run on the same operating systems used in industry and the home.

It is increasingly common for modern medical devices, such as MRI machines, medication dispensers, and surgical navigation systems, to run on widely available operating systems, such as Microsoft Windows, iOS, Linux, or Android. For example, an MRI machine likely has a general-purpose computer physically housed inside the machine itself. Alongside familiar operating systems and hardware, off-the-shelf software libraries, like the readily available OpenSSL, provide quick building blocks for devices, giving them modern security and dependable OS updates. Over time, though, the operating system and libraries must be maintained and updated to gain access to the latest security patches.

Timely patching of medical devices is critical to maintain their security throughout the entire usable lifespan of the device, since the security flaw being patched is quite possibly already being exploited by computer hackers. One of the biggest challenges facing any programmable electronic device is that the mean-time-to-patch is frequently longer than the mean-time-to-exploitation. The time needed to distribute and apply a patch, following any verification testing, further increases the time it takes from when a security flaw is discovered to when it is actually resolved (i.e., mitigated). The actual process of software patching is a waterfall process where each step must be completed serially and in a specific order.
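To make the gap between time-to-patch and time-to-exploitation concrete, here is a minimal Python sketch. It assumes the serial (waterfall) steps described above simply add up, and the function name, step durations, and dates are all hypothetical values chosen for illustration, not measurements.

```python
from datetime import date, timedelta
from typing import List


def exposure_window_days(
    disclosed_on: date,
    serial_step_days: List[int],
    first_exploited_on: date,
) -> int:
    """Days a device stays exploitable before the patch is finally applied.

    Because the steps run serially, the total time-to-patch is their sum.
    Returns 0 if the patch lands before exploitation begins.
    """
    patched_on = disclosed_on + timedelta(days=sum(serial_step_days))
    return max((patched_on - first_exploited_on).days, 0)


# Hypothetical timeline: 10 days of vendor regression testing,
# 20 days of distribution, 5 days of on-site scheduling and installation.
window = exposure_window_days(
    disclosed_on=date(2024, 1, 1),
    serial_step_days=[10, 20, 5],
    first_exploited_on=date(2024, 1, 8),
)
print(window)
```

Shortening any single step shrinks the window, but because the steps are serial, the slowest one tends to dominate.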

Your local bank’s ATM might be running on a Windows PC with the same OS as your personal computer.

Medical devices are subject to rigorous testing by the manufacturer, and this testing is typically based on a specific configuration and patch level of the operating system and third-party software libraries. For a medical device that uses a commodity operating system such as Microsoft Windows or Linux, that software configuration will often specify the operating system version and patch level. When a new patch is released, the manufacturer performs regression testing to make sure that the new patch doesn’t adversely affect the performance of the medical device.
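One way to picture a validated configuration is as a manifest of component versions that the installed system can be compared against. The sketch below is an illustration only: the component names and version strings are hypothetical, and a real device would gather the installed values from the operating system rather than from a hand-written dictionary.

```python
from typing import Dict, Optional, Tuple

Drift = Dict[str, Tuple[Optional[str], Optional[str]]]


def configuration_drift(validated: Dict[str, str], installed: Dict[str, str]) -> Drift:
    """Map each mismatched component to (validated_version, installed_version).

    A value of None means the component is absent on that side, for example
    software that was installed in the field but never validated.
    """
    drift: Drift = {}
    for name in sorted(set(validated) | set(installed)):
        expected = validated.get(name)
        actual = installed.get(name)
        if expected != actual:
            drift[name] = (expected, actual)
    return drift


# Hypothetical manifests for illustration.
validated_baseline = {"os_patch_level": "2024-01", "openssl": "3.0.12"}
installed_now = {"os_patch_level": "2024-03", "openssl": "3.0.12", "screensaver": "1.0"}

for component, (expected, actual) in configuration_drift(validated_baseline, installed_now).items():
    print(f"{component}: validated={expected} installed={actual}")
```

An empty result means the device still matches the configuration the manufacturer tested. Any entry marks a departure from that validated state.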

This testing isn’t performed simply to satisfy regulatory requirements; there are real software risk management concerns when patching a medical device’s computer server. For example, an update may remove or change the underlying functionality of the operating system (e.g., deprecating a function in the programming interface to the OS), a simple change that could cause the medical device to stop functioning. In other cases, the change might not be so obvious. In rare cases, a subtle timing change may be enough to impact the integrity of clinical data, or worse, unintentionally change diagnostic results or therapy parameters. To address these issues on all of the existing devices in use when a new patch needs to be deployed, manufacturers are required to track individual devices and their configurations so that impacted devices can be quickly located in the event of a recall.

In cases where the OS patch updates or changes an application programming interface (API), it may remove or change a function that is used by the medical device’s programming, causing the device to no longer operate correctly. While this is unlikely in the case of a security patch, it cannot be ruled out. It is reasonable for security patches to remove older cryptography or other functions that are found to be insecure. For devices that have not yet been updated to rely on newer capabilities, issues may be found after patching. This may be immediately apparent if the lost functionality was critical to device functionality. Issues may not be found until later if the functionality removed in the patch is only needed for a small part of the device’s workflow. Fortunately, loss of device functionality is detectable by exercising the device. Timing changes, however, are much more difficult to detect and are often only found through rigorous verification, validation, and stress testing performed by the manufacturer.
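The loss of a function after a patch, such as an older cryptographic algorithm being removed, is the kind of change a device can probe for directly. The following Python sketch uses the standard library's `hashlib.algorithms_available` to report which required hash algorithms are missing. The function name and the list of required algorithms are assumptions for illustration. As the paragraph above notes, a probe like this catches missing functionality but does nothing to detect timing changes.

```python
import hashlib
from typing import Iterable, List, Optional


def missing_capabilities(
    required: Iterable[str],
    available: Optional[Iterable[str]] = None,
) -> List[str]:
    """Return the required hash algorithms that the platform no longer offers."""
    if available is None:
        # Names of the hash algorithms this Python runtime can actually use.
        available = hashlib.algorithms_available
    available_names = {name.lower() for name in available}
    return sorted(name for name in required if name.lower() not in available_names)


# Hypothetical requirement list for a device application.
missing = missing_capabilities(["sha256", "sha1"])
if missing:
    print("Patch removed required algorithms:", missing)
else:
    print("All required algorithms are present")
```

Running such a probe right after a patch turns a failure that would otherwise surface mid-workflow into one that is visible before the device returns to service.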

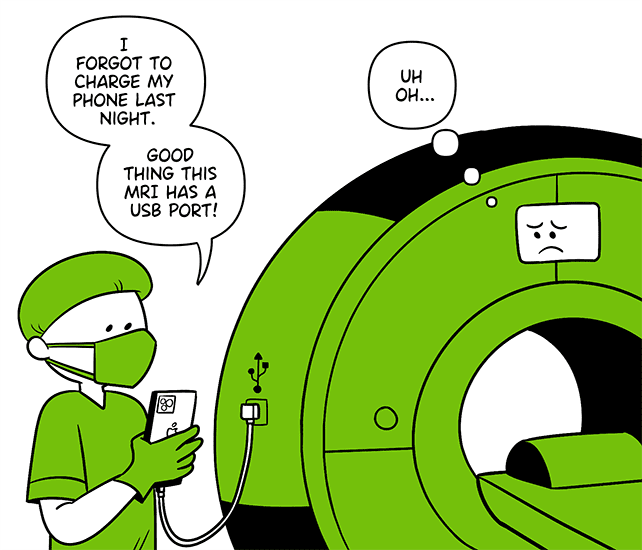

When hospital staff go a bit too far in interacting with major medical devices.

When a Healthcare Delivery Organization (HDO) applies an operating system patch, or other software patch, that wasn’t provided or approved by the Medical Device Manufacturer (MDM), that medical device is no longer in a validated state: it no longer matches the exact configuration that was validated by the MDM. A standoff may occur between the healthcare provider’s IT department and the medical device manufacturer when a critical security risk necessitates patching the device prior to validation. For example, the EternalBlue exploit, developed by the U.S. NSA and later leaked by the Shadow Brokers, was used in the worldwide WannaCry ransomware outbreak, which impacted hospitals, including medical devices. Hospital IT was left, in many cases, to wait for medical device manufacturers to issue patches to protect against WannaCry. More recently, the Log4Shell vulnerability was published; it allowed remote code execution in software using Apache Log4j, a logging library frequently used by Java-based software, including software in medical devices.

Network isolation of these medical devices is one of the few tools available today to healthcare providers for controlling broad risks to network-connected medical devices. Hospitals that patch medical devices on their own before getting permission from the MDM have adulterated those devices. When this happens, the HDO (not the MDM) is often expected to be responsible for any misbehavior of the device.

The TINY GVS approach says that we should design our devices to assume that there will be an urgent need for patches to the OS used within major medical devices and that this will recur over and over. Therefore, medical devices should be designed up-front to acknowledge that reality and mitigate those risks.

Designing for intermittent validation relies on the underlying assumption that a device will not always be in the exact configuration that was verified and validated by the MDM. Since this may impact device safety, availability, and recall, it is important that it is done carefully and purposefully. Supporting intermittent validation requires changes for both the medical device manufacturer and the healthcare provider. The medical device manufacturer must institute additional controls and integrity checks into their software to ensure that the device is still meeting its intended use and essential performance. The healthcare provider must assume additional responsibility for applying patches and tracking configurations of each device.

One approach is for healthcare providers to perform rigorous testing of the medical device following application of a security patch. Achieving intermittent validation requires the MDM to design the device with the expectation that customers will apply security patches at times of their own choosing. It also requires that the healthcare provider have a process in place for applying patches and performing testing in a controlled environment prior to putting the device back into service. For example, the HDO IT and biomedical departments could have a joint process where a sample device is pulled from service and patched, and its functionality is then verified by the biomed department. The device and all other similar devices would be patched once confidence has been established that the patch will not impact the availability or integrity of the device.
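The joint IT and biomed process described above can be sketched as a pilot-first rollout. This is an illustration under stated assumptions, not a prescribed procedure: `apply_patch` and `verify_function` are hypothetical callables standing in for the hospital's own patching and functional verification steps, and the device identifiers are made up.

```python
from typing import Callable, Dict, List


def staged_patch_rollout(
    device_ids: List[str],
    apply_patch: Callable[[str], None],
    verify_function: Callable[[str], bool],
) -> Dict[str, List[str]]:
    """Patch one pilot device first; touch the rest only if the pilot verifies."""
    result: Dict[str, List[str]] = {"in_service": [], "quarantined": [], "unpatched": []}
    if not device_ids:
        return result

    pilot, rest = device_ids[0], device_ids[1:]
    apply_patch(pilot)
    if not verify_function(pilot):
        # The pilot stays out of service and the rest of the fleet is left alone.
        result["quarantined"].append(pilot)
        result["unpatched"].extend(rest)
        return result
    result["in_service"].append(pilot)

    # Confidence established: patch the remaining devices, verifying each one.
    for device in rest:
        apply_patch(device)
        key = "in_service" if verify_function(device) else "quarantined"
        result[key].append(device)
    return result


# Hypothetical usage with stand-in callables.
log: List[str] = []
outcome = staged_patch_rollout(
    ["pump-01", "pump-02", "pump-03"],
    apply_patch=log.append,
    verify_function=lambda device: device != "pump-02",
)
print(outcome)
```

Verifying every device, not just the pilot, reflects the point that a device's configuration in the field may differ from that of its siblings.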

Another approach is field-level self-validation, where the medical device performs automated integrity checks at power-up and then periodically re-checks itself while running to ensure it is operating in an expected manner. Most medical devices already perform some degree of self-testing, though it may need to be expanded and made more robust in order to account for unforeseen issues that could be introduced by field patching of the device. A good example of this today is medical mobile applications that run on smartphones with a variety of OS versions and neighboring applications. Medical device applications running on a smartphone can be developed to perform rigorous integrity checks to assure that the platform is stable and meeting the needs of the application. It is common for a medical device application to refuse to run if the mobile device operating system has been tampered with (e.g., rooted). Applying these same principles to purpose-built medical devices would pave the way for security patches to be applied by the healthcare provider while the device functions properly in an intermittently validated state. In fact, some medical devices already operate in this model today.
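A minimal Python sketch of field-level self-validation might look like the following. It assumes a device exposes a set of named checks that can run at power-up and again on a schedule. The check names, the timing budget, and the `safe_state` label are hypothetical, and real devices would define their own checks against their essential performance requirements.

```python
import time
from typing import Callable, Dict, List

Check = Callable[[], bool]


def timing_check(operation: Callable[[], object], budget_seconds: float) -> Check:
    """Build a check that passes only if `operation` finishes within its budget."""
    def check() -> bool:
        start = time.perf_counter()
        operation()
        return (time.perf_counter() - start) <= budget_seconds
    return check


def run_self_checks(checks: Dict[str, Check]) -> List[str]:
    """Run every check and return the names of those that failed or raised."""
    failed: List[str] = []
    for name, check in checks.items():
        try:
            passed = check()
        except Exception:
            passed = False  # A crashing check is treated as a failed check.
        if not passed:
            failed.append(name)
    return failed


def device_state(failed_checks: List[str]) -> str:
    """Refuse normal operation if any self-check failed."""
    return "operational" if not failed_checks else "safe_state"


# Hypothetical checks for illustration.
checks = {
    "platform_not_tampered": lambda: True,
    "control_loop_latency": timing_check(lambda: sum(range(1000)), budget_seconds=1.0),
}
failed = run_self_checks(checks)
print(device_state(failed), failed)
```

The same `run_self_checks` call can be invoked at power-up and then from a periodic timer, so a patch applied while the device is in the field is exercised before and during clinical use.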

Finally, while it’s a topic for a different blog, if not an entire book, this is also relevant to the broader question of Right to Repair. Given pressure from Right to Repair supporters and the need for more rapid application of security patches, new models and processes are needed to provide flexibility and more rapid security updates for medical devices. These could also be used in situations where Right to Repair is legally forbidden, by giving the manufacturer the ability to do field-level self-validation that detects unauthorized repairs that could cause harm.

With a little bit of TINY GVS, medical devices can quickly apply critical security patches without disrupting the safe and effective use for patient care.

Scott is a graduate of the University of Minnesota with a Master of Business Administration from the Carlson School of Management and a bachelor’s degree in Electrical and Computer Engineering with a management minor.

MedSec is focused solely on cybersecurity for the Healthcare and Public Health Sector. We provide healthcare delivery organizations and medical device manufacturers with a holistic knowledge of cybersecurity—from design and development expertise, to regulatory and standards guidance, to implementation on the front lines of patient care.

1. Device manufacturers’ Instructions for Use (IFU) for regulated medical devices often state that any modification of the device voids all safety testing, essential performance, and warranty. If the hospital elects to modify the device, the IFU may state that the hospital becomes responsible for any malfunction or injury that results from the device. The reason given for this clause is that, once a hospital has modified a device independent of the manufacturer’s oversight, specific instructions and verification, the device is no longer in a state for which the manufacturer has guaranteed safety and efficacy. Devices that have had unauthorized case removal, access, or changes are treated by the manufacturer as Not for Human Use.

2. 21 CFR 820.30(f) Design Verification and 21 CFR 820.30(g) Design Validation require a manufacturer to confirm that the completed device meets the design requirements, user needs, and intended use. Further, 21 CFR 820.30(i) Design Changes requires the manufacturer to document and test design changes prior to implementation.
